Secure Offline RAG System

Client: Open Source Challenge

Languages & tools: Python, LangChain, FAISS, Streamlit

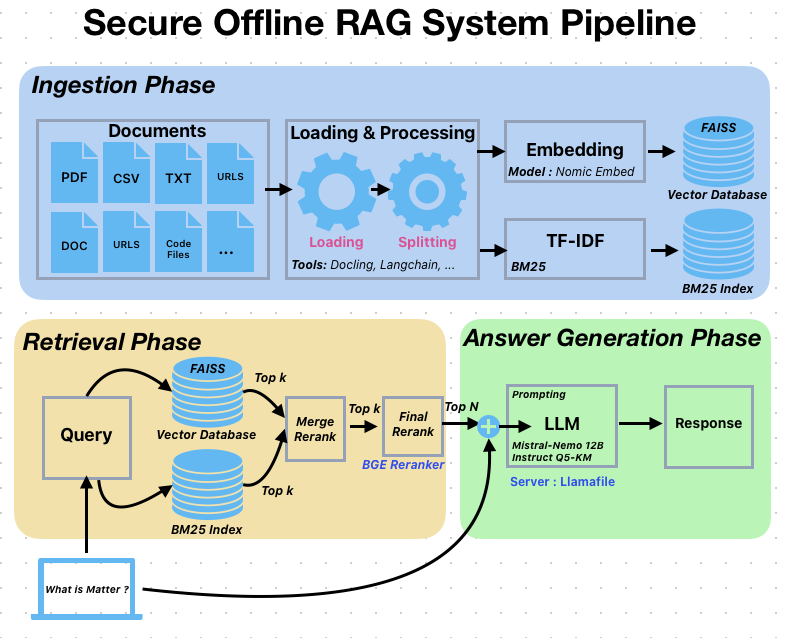

This Retrieval-Augmented Generation (RAG) system won 1st place in the Secure RAG Challenge by UnderstandTech. It is built to run fully offline, with an emphasis on data privacy and open-source technologies.

I designed a multi-stage pipeline that combines document processing, hybrid retrieval, and local language-model inference. Docling handles PDF-to-Markdown conversion. Retrieval uses two encodings: Nomic Embed vectors capture semantic similarity, and BM25 with TF-IDF handles keyword matching. The dense vectors are stored in a FAISS index, and a BGE reranker reorders the retrieved candidates so that the most relevant passages reach the model. The language model runs through Llamafile, which can execute on the CPU, the GPU, or both, giving fine control over resource usage and inference speed. The whole system was tested and tuned on hardware with 32 GB of RAM and 8 GB of VRAM.
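The hybrid retrieval stage can be illustrated with a small, dependency-free sketch. The description above does not say how keyword and semantic results are combined, so this example assumes reciprocal rank fusion (RRF). Precomputed vectors stand in for Nomic Embed outputs, a brute-force cosine scan stands in for the FAISS index, and an optional `rerank` callable stands in for the BGE reranker. `tokenize`, `BM25`, `cosine`, `rrf`, and `hybrid_search` are hypothetical names, not the project's code.

```python
import math
import re
from collections import Counter, defaultdict
from typing import Callable, Optional, Sequence


def tokenize(text: str) -> list[str]:
    # Simple lowercase word tokenizer; a real system would use a proper analyzer
    return re.findall(r"\w+", text.lower())


class BM25:
    """Okapi BM25 over an in-memory corpus (illustrative, not optimized)."""

    def __init__(self, docs: Sequence[str], k1: float = 1.5, b: float = 0.75):
        self.k1, self.b = k1, b
        self.docs = [tokenize(d) for d in docs]
        self.tfs = [Counter(toks) for toks in self.docs]
        total = sum(len(toks) for toks in self.docs)
        self.avgdl = total / len(self.docs) if total else 1.0
        n = len(self.docs)
        df = Counter(term for toks in self.docs for term in set(toks))
        # "+1" inside the log keeps IDF positive even for very common terms
        self.idf = {t: math.log((n - f + 0.5) / (f + 0.5) + 1.0) for t, f in df.items()}

    def scores(self, query: str) -> list[float]:
        q_terms = tokenize(query)
        results = []
        for toks, tf in zip(self.docs, self.tfs):
            # Length normalization: longer-than-average documents are penalized
            norm = self.k1 * (1 - self.b + self.b * len(toks) / self.avgdl)
            results.append(sum(
                self.idf[t] * tf[t] * (self.k1 + 1) / (tf[t] + norm)
                for t in q_terms if t in tf
            ))
        return results


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def rrf(rankings: list[list[int]], k: int = 60) -> list[int]:
    """Reciprocal rank fusion: each list contributes 1 / (k + rank) per document."""
    fused: dict[int, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by document id for determinism
    return [d for d, _ in sorted(fused.items(), key=lambda kv: (-kv[1], kv[0]))]


def hybrid_search(
    query: str,
    query_vec: Sequence[float],
    docs: Sequence[str],
    doc_vecs: Sequence[Sequence[float]],
    top_k: int = 3,
    rerank: Optional[Callable[[str, str], float]] = None,
) -> list[int]:
    kw = BM25(docs).scores(query)
    dense = [cosine(query_vec, v) for v in doc_vecs]
    kw_rank = sorted(range(len(docs)), key=lambda i: -kw[i])
    dense_rank = sorted(range(len(docs)), key=lambda i: -dense[i])
    candidates = rrf([kw_rank, dense_rank])[:top_k]
    if rerank is not None:
        # Second stage: rescore only the fused candidates, e.g. with a cross-encoder
        candidates = sorted(candidates, key=lambda i: -rerank(query, docs[i]))
    return candidates
```

RRF only looks at ranks, so BM25 scores and cosine similarities never have to be put on a common scale; that is one common reason to prefer it over a weighted sum of raw scores. Reranking only the top few fused candidates keeps the more expensive reranker off the full corpus.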

The system handles several content types: text documents (Markdown, PDF, CSV, HTML), source code in many programming languages (Python, JavaScript, Java, C++, Go, Rust, and more), and technical documentation. Its parsing preserves header hierarchies and document metadata. Other technical highlights include a caching system that speeds up repeated operations, GPU-accelerated embeddings with automatic memory management, thread-safe LLM calls, and a configurable processing pipeline with detailed logging and progress tracking. A Streamlit web interface makes the system easy to use, and the design focuses on practical deployment, making it suitable for production environments that need secure, offline RAG with minimal computational overhead.
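Header-aware parsing can be illustrated with a small splitter that cuts a Markdown document at each heading and records the enclosing heading path as chunk metadata. This is a minimal standard-library sketch, not the project's parser: `split_markdown`, `Chunk`, and the `h1`/`h2`/`source` metadata keys are hypothetical. LangChain provides `MarkdownHeaderTextSplitter` for the same purpose.

```python
import re
from dataclasses import dataclass, field

FENCE = "`" * 3  # a code-fence marker, built without writing three literal backticks
HEADER_RE = re.compile(r"^(#{1,6})\s+(.*\S)\s*$")


@dataclass
class Chunk:
    text: str
    metadata: dict[str, str] = field(default_factory=dict)


def split_markdown(markdown: str, source: str = "") -> list[Chunk]:
    """Split Markdown at headings, recording the heading path as metadata."""
    chunks: list[Chunk] = []
    path: dict[int, str] = {}  # heading level -> title of the currently open section
    buffer: list[str] = []
    in_fence = False

    def flush() -> None:
        text = "\n".join(buffer).strip()
        if text:
            meta = {f"h{level}": title for level, title in sorted(path.items())}
            if source:
                meta["source"] = source
            chunks.append(Chunk(text, meta))
        buffer.clear()

    for line in markdown.splitlines():
        if line.lstrip().startswith(FENCE):
            in_fence = not in_fence  # toggle on opening/closing code fences
        match = None if in_fence else HEADER_RE.match(line)
        if match is None:
            buffer.append(line)
            continue
        flush()  # a heading closes the previous chunk
        level = len(match.group(1))
        # Sections at this level or deeper are now closed
        for open_level in [lvl for lvl in path if lvl >= level]:
            del path[open_level]
        path[level] = match.group(2)
    flush()
    return chunks
```

Header detection is skipped inside fenced code blocks, so a Python comment such as `# not a header` inside a code sample stays part of the chunk text instead of starting a new section.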
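The caching and thread-safety points can be sketched together. The description does not say what is cached or how locking is implemented, so this example makes two assumptions: embeddings are cached in memory under a SHA-256 hash of the model name and text, and a single local model instance is guarded by a lock so that concurrent callers (for example, several Streamlit sessions) queue instead of generating in parallel. `EmbeddingCache`, `ThreadSafeLLM`, and the injected `embed_fn`/`generate_fn` callables are hypothetical.

```python
import hashlib
import threading
from typing import Callable, Sequence


class EmbeddingCache:
    """In-memory embedding cache keyed by a hash of (model name, text)."""

    def __init__(self, embed_fn: Callable[[list[str]], list[list[float]]], model: str):
        self._embed_fn = embed_fn  # assumed to return vectors in input order
        self._model = model
        self._store: dict[str, list[float]] = {}
        self._lock = threading.Lock()
        self.misses = 0

    def _key(self, text: str) -> str:
        return hashlib.sha256(f"{self._model}\x00{text}".encode("utf-8")).hexdigest()

    def embed(self, texts: Sequence[str]) -> list[list[float]]:
        keys = [self._key(t) for t in texts]
        with self._lock:
            missing = [(k, t) for k, t in zip(keys, texts) if k not in self._store]
        unique = dict(missing)  # repeated texts in one batch are embedded once
        if unique:
            # Computed outside the lock so other threads can read cached entries
            vectors = self._embed_fn(list(unique.values()))
            with self._lock:
                self.misses += len(unique)
                self._store.update(zip(unique.keys(), vectors))
        with self._lock:
            return [self._store[k] for k in keys]


class ThreadSafeLLM:
    """Serializes calls to a model object that should not run concurrent generations."""

    def __init__(self, generate_fn: Callable[[str], str]):
        self._generate_fn = generate_fn
        self._lock = threading.Lock()

    def __call__(self, prompt: str) -> str:
        with self._lock:  # one generation at a time on the shared model
            return self._generate_fn(prompt)
```

Two threads can still miss on the same text at the same moment and embed it twice; that only wastes work, because both write the same vector. A single lock around generation trades throughput for safety, which is a reasonable default when only one model instance fits in memory.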